NeuroNCAP: Photorealistic Closed-loop Safety Testing for Autonomous Driving

Core idea

The core idea in NeuroNCAP is to leverage NeRFs for autonomous driving.

Abstract

We present a versatile NeRF-based simulator for testing autonomous driving (AD) software systems, designed with a focus on sensor-realistic closed-loop evaluation and the creation of safety-critical scenarios. The simulator learns from sequences of real-world driving sensor data and enables reconfigurations and renderings of new, unseen scenarios. In this work, we use our simulator to test the responses of AD models to safety-critical scenarios inspired by the European New Car Assessment Programme (Euro NCAP). Our evaluation reveals that, while state-of-the-art end-to-end planners excel in nominal driving scenarios in an open-loop setting, they exhibit critical flaws when navigating our safety-critical scenarios in a closed-loop setting. This highlights the need for advancements in the safety and real-world usability of end-to-end planners. By publicly releasing our simulator and scenarios as an easy-to-run evaluation suite, we invite the research community to explore, refine, and validate their AD models in controlled, yet highly configurable and challenging sensor-realistic environments. Code is available at https://github.com/wljungbergh/NeuroNCAP

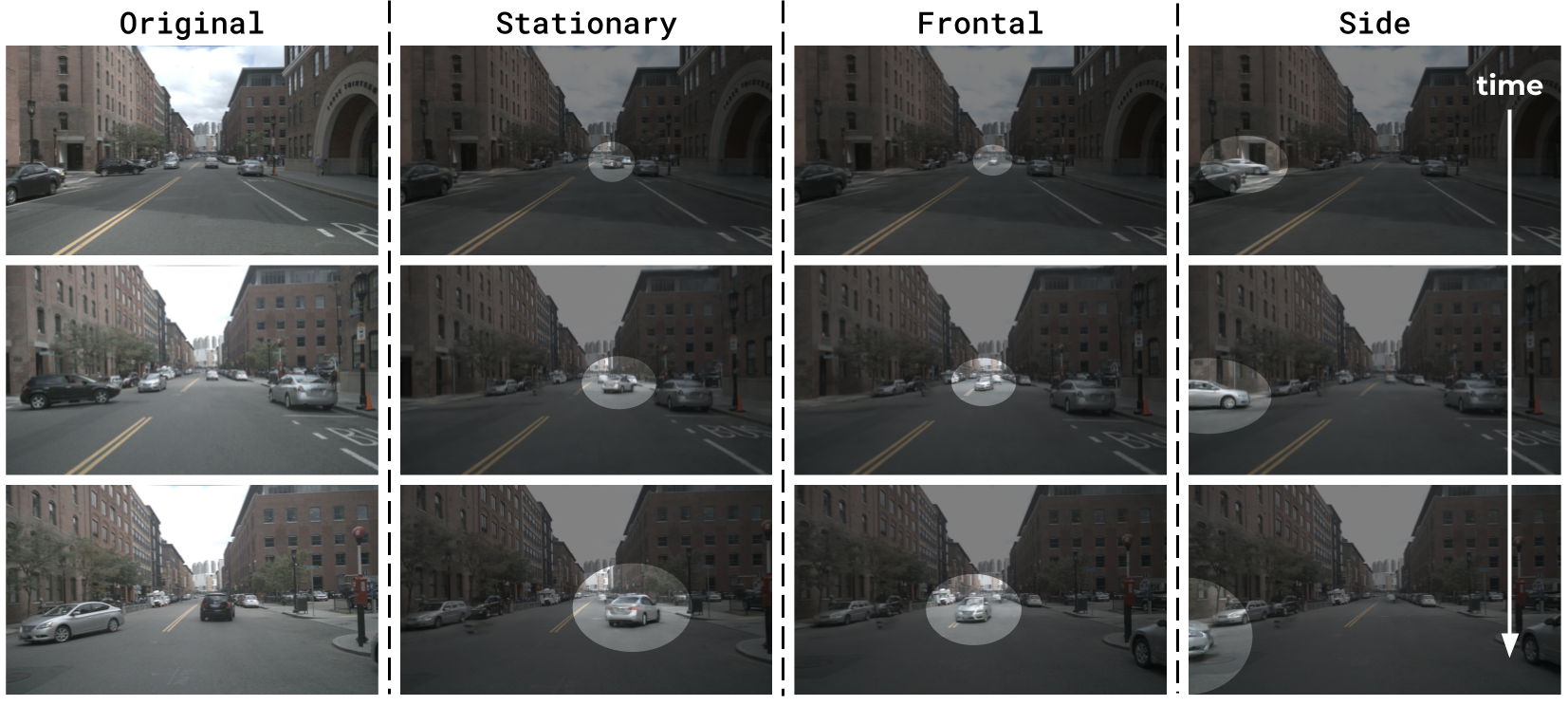

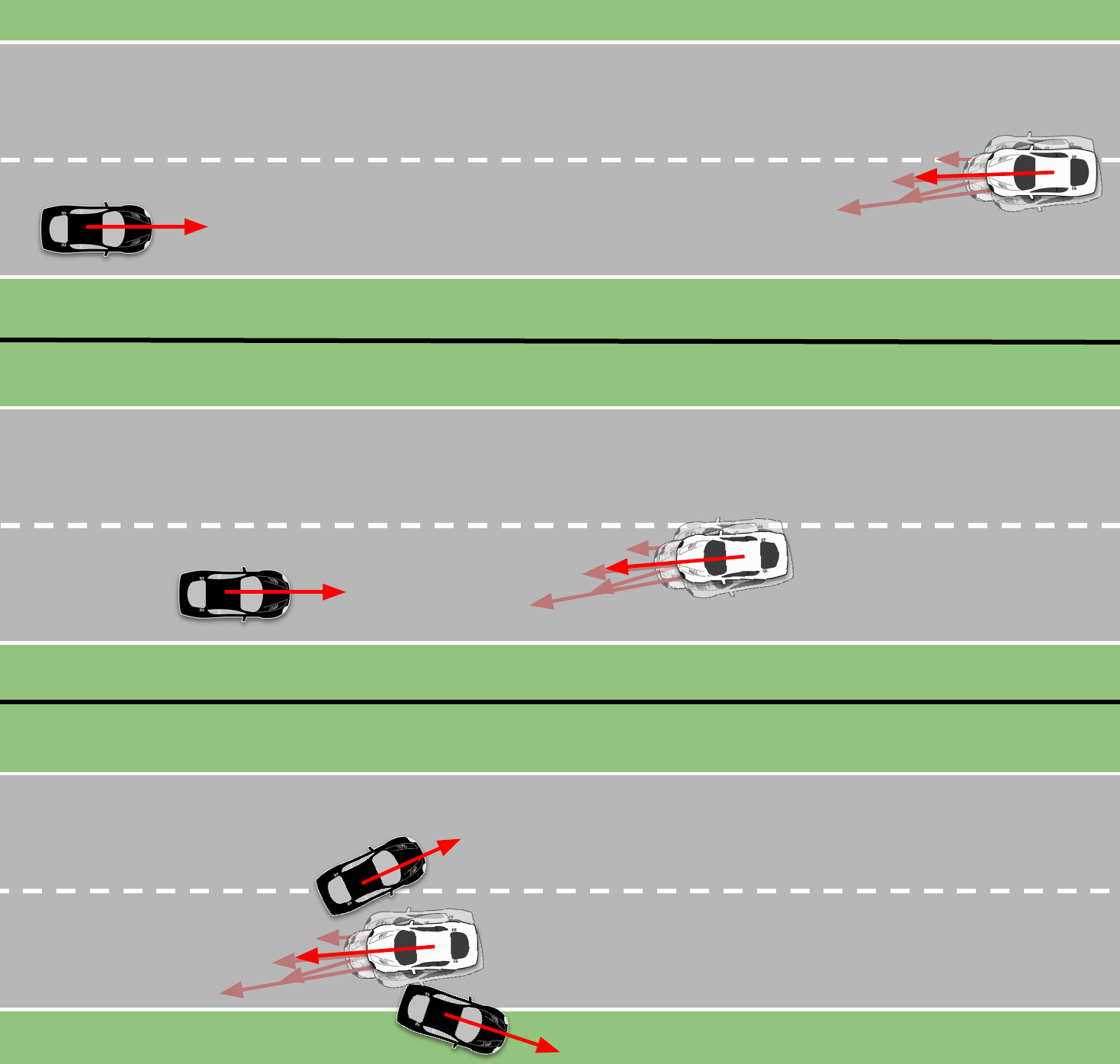

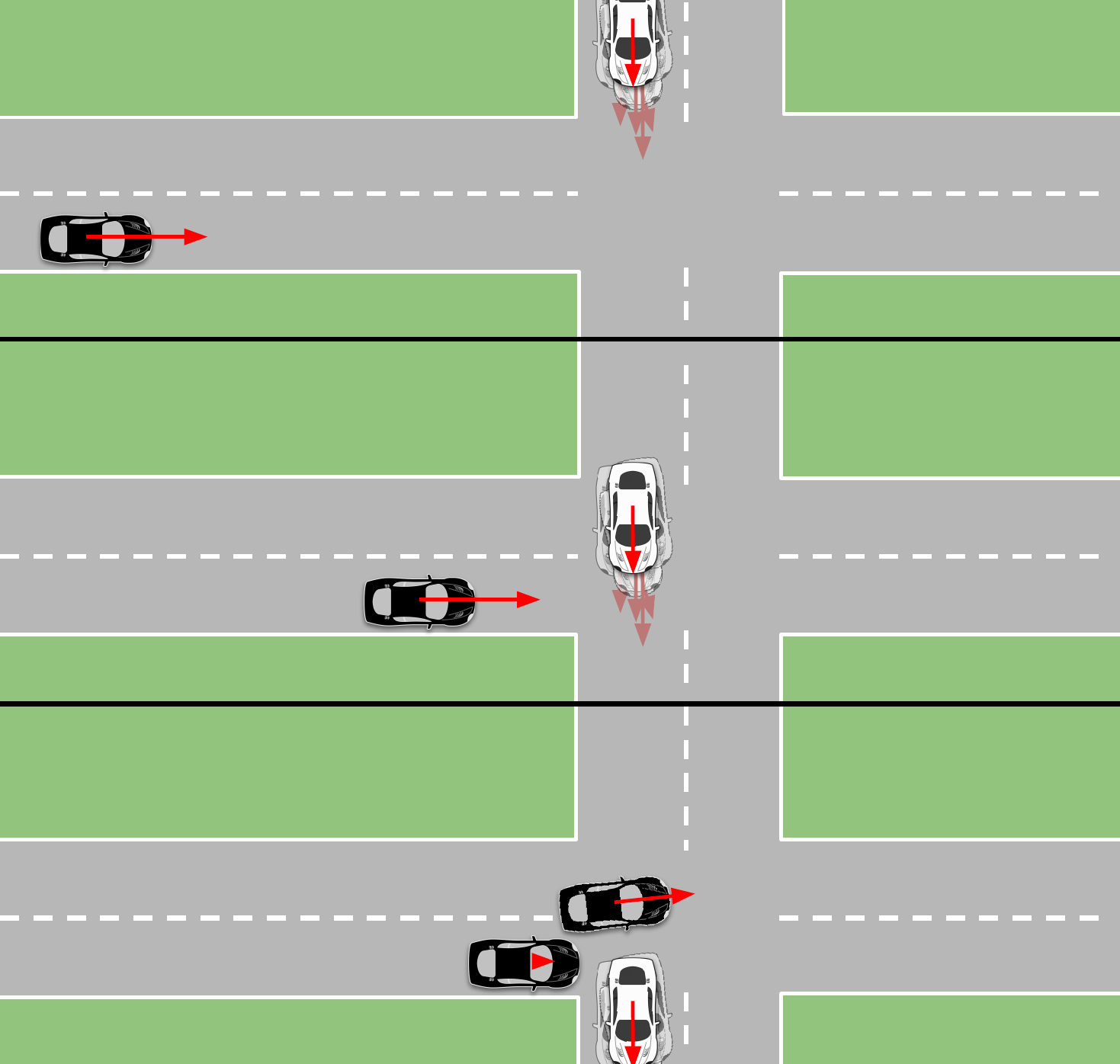

In Fig. 1 below we show an original nuScenes sequence (#0103), followed by examples of our three types of NeuroNCAP scenarios: stationary, frontal, and side. The inserted safety-critical actor has been highlighted for illustration purposes. We can generate hundreds of unique scenarios from each sequence by selecting different actors, jittering their trajectories, and choosing different starting conditions for the ego vehicle.

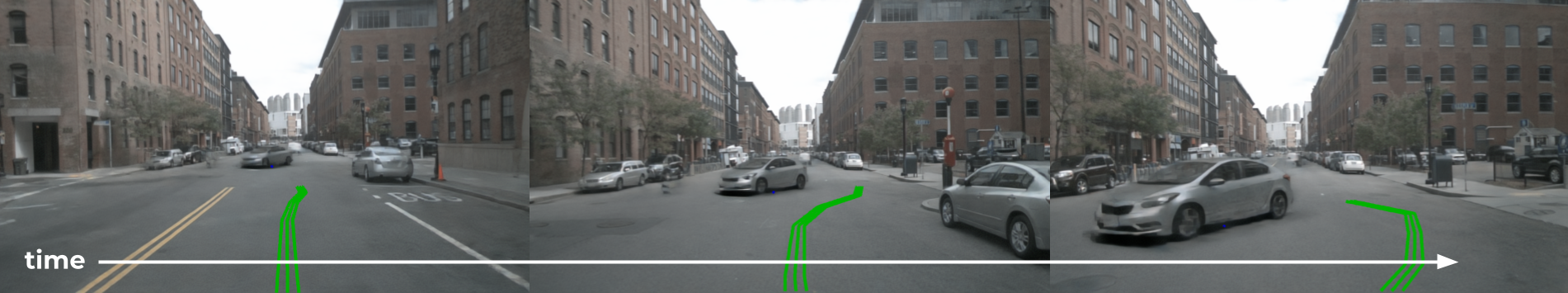

Once a scenario has been instantiated, we can run an entire AD system in closed-loop, as shown in Fig. 2. Here we show an example of UniAD

Closed-loop Simulator

Our closed-loop simulator repeatedly performs four steps (illustrated below):

- The Neural Renderer generates high-quality sensor data. The renderer is trained on a sequence of real-world driving data.

- The AD Model predicts a future ego-vehicle trajectory given the rendered camera input and the ego-vehicle state.

- The Controller converts the planned trajectory to a set of acceleration and steering signals.

- The Vehicle Model propagates the ego-state forward in time, based on the control inputs.
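The four steps above can be sketched as a simple loop. This is a minimal illustration, not the released implementation: the `render` and `plan` callables stand in for the Neural Renderer and the AD Model, and the proportional controller, the kinematic bicycle model, the time step, and all parameter values are assumptions chosen only to make the sketch runnable.

```python
import math
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class EgoState:
    x: float = 0.0      # position [m]
    y: float = 0.0      # position [m]
    yaw: float = 0.0    # heading [rad]
    speed: float = 0.0  # longitudinal speed [m/s]


Trajectory = List[Tuple[float, float]]  # planned (x, y) waypoints, world frame


def controller(state: EgoState, trajectory: Trajectory,
               target_speed: float) -> Tuple[float, float]:
    """Hypothetical controller: proportional speed control and
    steering towards the first planned waypoint."""
    tx, ty = trajectory[0]
    heading_error = math.atan2(ty - state.y, tx - state.x) - state.yaw
    # Wrap the error to [-pi, pi] so the vehicle turns the short way round.
    heading_error = math.atan2(math.sin(heading_error), math.cos(heading_error))
    accel = 1.0 * (target_speed - state.speed)
    steer = max(-0.5, min(0.5, heading_error))
    return accel, steer


def vehicle_model(state: EgoState, accel: float, steer: float,
                  dt: float, wheelbase: float = 2.7) -> EgoState:
    """Assumed kinematic bicycle model, integrated with one Euler step."""
    x = state.x + state.speed * math.cos(state.yaw) * dt
    y = state.y + state.speed * math.sin(state.yaw) * dt
    yaw = state.yaw + state.speed / wheelbase * math.tan(steer) * dt
    speed = max(0.0, state.speed + accel * dt)
    return EgoState(x, y, yaw, speed)


def run_closed_loop(render: Callable[[EgoState], Any],
                    plan: Callable[[Any, EgoState], Trajectory],
                    state: EgoState, steps: int,
                    dt: float = 0.5, target_speed: float = 5.0) -> List[EgoState]:
    """Repeat render -> plan -> control -> propagate for `steps` iterations."""
    history = [state]
    for _ in range(steps):
        images = render(state)                                      # 1. Neural Renderer
        trajectory = plan(images, state)                            # 2. AD Model
        accel, steer = controller(state, trajectory, target_speed)  # 3. Controller
        state = vehicle_model(state, accel, steer, dt)              # 4. Vehicle Model
        history.append(state)
    return history
```

Passing stub callables (for example, a `plan` that always returns a waypoint straight ahead) is enough to exercise the loop. In a real setup the two callables would wrap the trained renderer and the planner under test.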

NeuroNCAP Evaluation Protocol

In contrast to the common practice of averaging performance across large-scale datasets, NeuroNCAP focuses on a small set of carefully designed safety-critical scenarios. Any model that cannot successfully handle all of them should be considered unsafe.

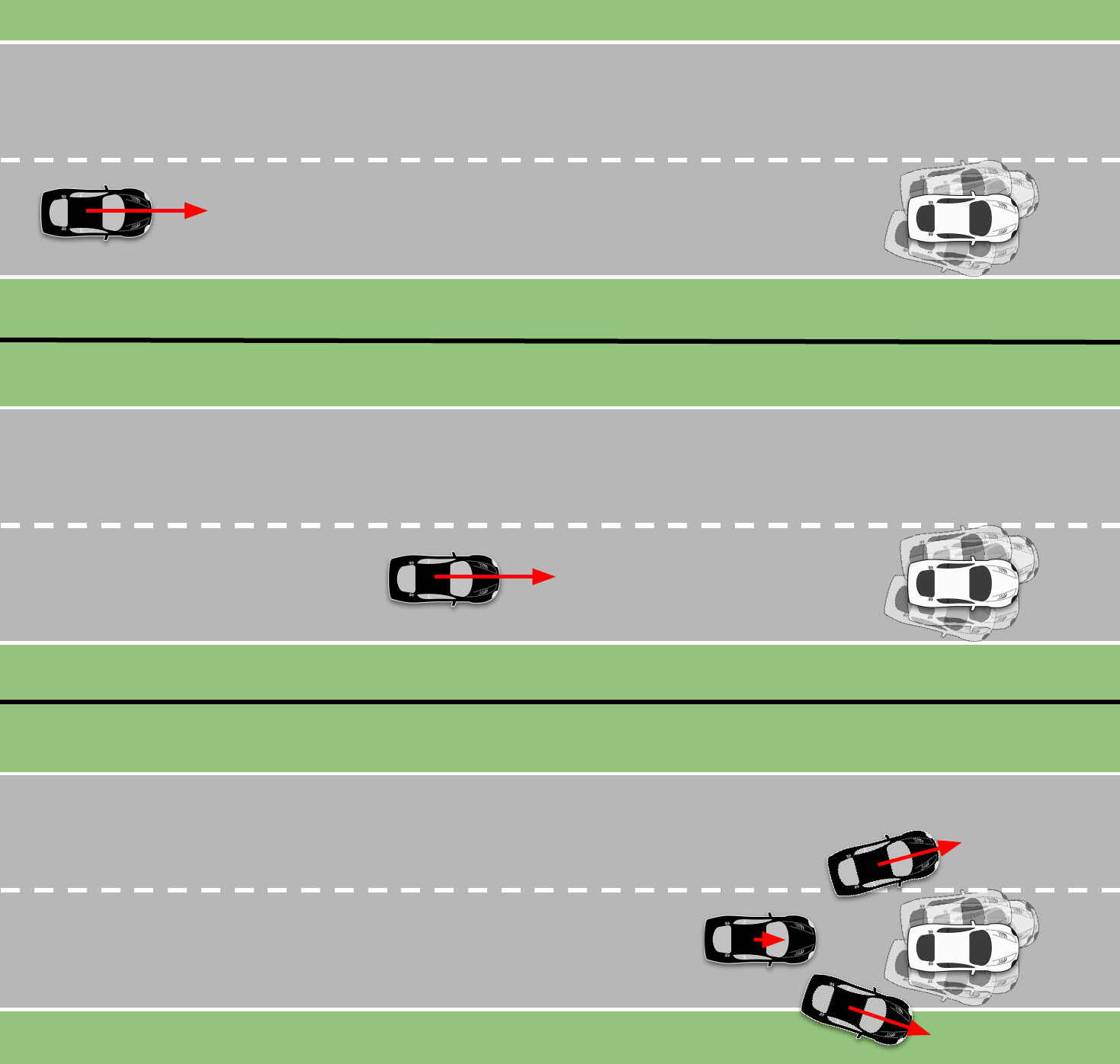

Creating safety-critical scenarios

To create safety-critical scenarios, we take inspiration from the industry-standard Euro NCAP testing and define three types of scenarios, each characterized by the behavior of the actor that the ego vehicle is about to collide with: stationary, frontal, and side.

In the stationary scenario, the actor is stationary in the ego vehicle's current trajectory, while in the frontal and side scenarios, the actor is moving towards the ego vehicle on a frontal or side collision course, respectively. In the stationary and side scenarios, a collision can be avoided either by stopping or by steering, while in the frontal scenario, a collision can only be avoided by steering.
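As a compact summary of the three scenario types and the maneuvers that can avoid a collision in each, the mapping can be written down as a small lookup. The type and function names here (`ScenarioType`, `Maneuver`, `AVOIDANCE_OPTIONS`, `can_avoid_by`) are illustrative assumptions and are not taken from the released code.

```python
from enum import Enum


class ScenarioType(Enum):
    STATIONARY = "stationary"  # actor stands still in the ego trajectory
    FRONTAL = "frontal"        # actor approaches head-on
    SIDE = "side"              # actor approaches from the side


class Maneuver(Enum):
    STOP = "stop"
    STEER = "steer"


# Which maneuvers can avoid the collision, as stated in the text above.
AVOIDANCE_OPTIONS = {
    ScenarioType.STATIONARY: frozenset({Maneuver.STOP, Maneuver.STEER}),
    ScenarioType.FRONTAL: frozenset({Maneuver.STEER}),
    ScenarioType.SIDE: frozenset({Maneuver.STOP, Maneuver.STEER}),
}


def can_avoid_by(scenario: ScenarioType, maneuver: Maneuver) -> bool:
    """Return True if `maneuver` can avoid the collision in `scenario`."""
    return maneuver in AVOIDANCE_OPTIONS[scenario]
```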

Creating photo-realistic safety-critical scenarios

From a sequence of real-world driving data, we train a neural rendering model

Creating countless photo-realistic safety-critical scenarios

We can generate multiple random instances of the same scenario by jittering attributes such as the actor's position and rotation, or by swapping the actor itself. Below we show three random runs of the same stationary scenario. The first two use the same actor, while the third uses a different one. All of these scenarios are run in closed-loop, with UniAD
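The jittering idea can be illustrated with a short sketch. Everything below is an assumption made for illustration: the `ActorPlacement` container, the Gaussian noise model, the standard deviations, and the actor-swap probability are not specified in the text and may differ from what the simulator actually does.

```python
import random
from dataclasses import dataclass, replace
from typing import Sequence


@dataclass(frozen=True)
class ActorPlacement:
    actor_id: str  # which recorded actor is inserted (hypothetical identifier)
    x: float       # position [m]
    y: float       # position [m]
    yaw: float     # rotation [rad]


def jitter_placement(base: ActorPlacement,
                     candidate_actor_ids: Sequence[str],
                     rng: random.Random,
                     pos_std: float = 0.5,
                     yaw_std: float = 0.05,
                     swap_prob: float = 0.3) -> ActorPlacement:
    """Return a randomized copy of `base`: Gaussian noise on position and
    rotation, and occasionally a different actor (all values assumed)."""
    actor_id = base.actor_id
    if candidate_actor_ids and rng.random() < swap_prob:
        actor_id = rng.choice(list(candidate_actor_ids))
    return replace(
        base,
        actor_id=actor_id,
        x=base.x + rng.gauss(0.0, pos_std),
        y=base.y + rng.gauss(0.0, pos_std),
        yaw=base.yaw + rng.gauss(0.0, yaw_std),
    )
```

Seeding the generator (for example `random.Random(0)`) makes each sampled scenario instance reproducible, which is convenient when comparing models on the same set of runs.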

Scoring safety-critical scenarios

For each scenario, a score is computed. A full score is achieved only by completely avoiding collision. Partial scores are awarded for successfully reducing the impact velocity. In the spirit of the 5-star Euro NCAP rating system, we compute the NeuroNCAP score (NNS) as

\[
\mathrm{NNS} =
\begin{cases}
5.0 & \text{if no collision occurs,} \\
4.0 \cdot \max\left(0,\, 1 - v_i / v_r\right) & \text{otherwise,}
\end{cases}
\]

where \(v_i\) is the impact speed, defined as the magnitude of the relative velocity between the ego vehicle and the colliding actor, and \(v_r\) is the reference impact speed that would occur if no action were performed. In other words, the score corresponds to a 5-star rating if a collision is entirely avoided. Otherwise, the rating decreases linearly from four stars to zero, reaching zero at (or above) the reference impact speed.
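The scoring rule described above (five stars for no collision, otherwise a linear decrease from four stars to zero at the reference impact speed) can be sketched in a few lines. The function name `neuroncap_score` and the convention of passing `None` for "no collision" are assumptions made for this example.

```python
from typing import Optional


def neuroncap_score(impact_speed: Optional[float], reference_speed: float) -> float:
    """Compute a NeuroNCAP-style score.

    impact_speed:    relative speed at impact [m/s], or None if no collision
                     occurred (a convention assumed for this sketch).
    reference_speed: impact speed that would occur if no action were taken [m/s].
    """
    if impact_speed is None:
        return 5.0  # collision fully avoided
    if reference_speed <= 0.0:
        raise ValueError("reference_speed must be positive")
    # Linear decrease from 4 stars (v_i = 0) to 0 stars (v_i >= v_r).
    return 4.0 * max(0.0, 1.0 - impact_speed / reference_speed)
```

For example, halving the impact speed relative to the reference yields two stars, while colliding at or above the reference speed yields zero.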

Evaluating state-of-the-art models in NeuroNCAP

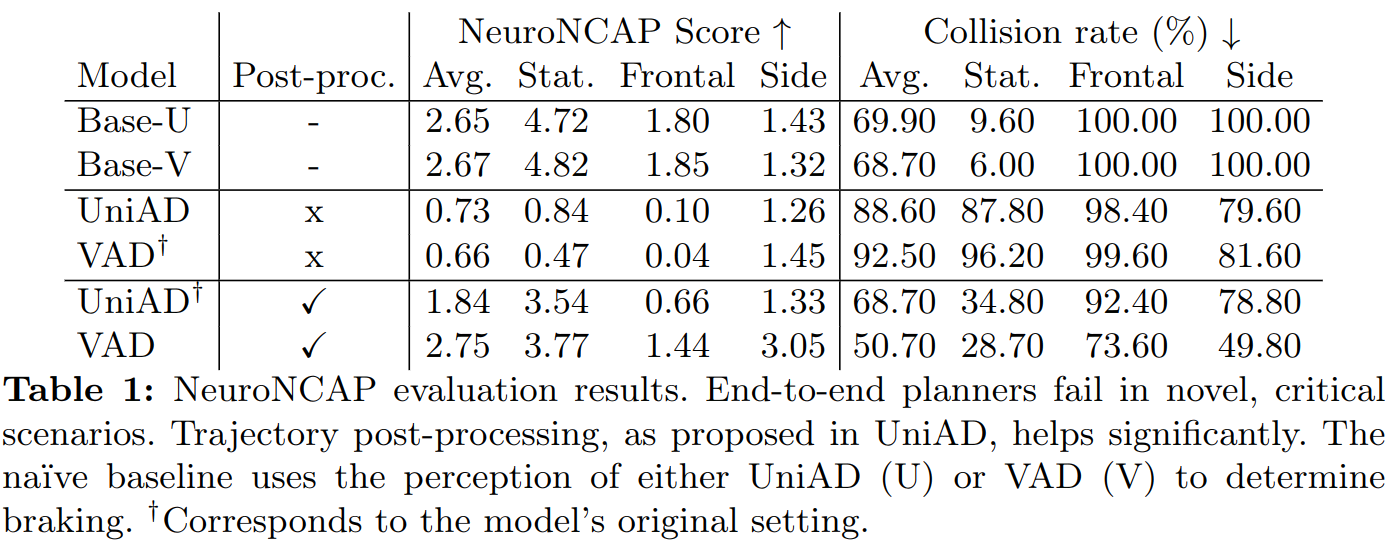

We evaluate the performance of two state-of-the-art end-to-end planners, UniAD

Below we show an example of a frontal collision scenario in which UniAD and VAD are each run with and without the post-processing step. In this case, all variants fail except UniAD with post-processing.

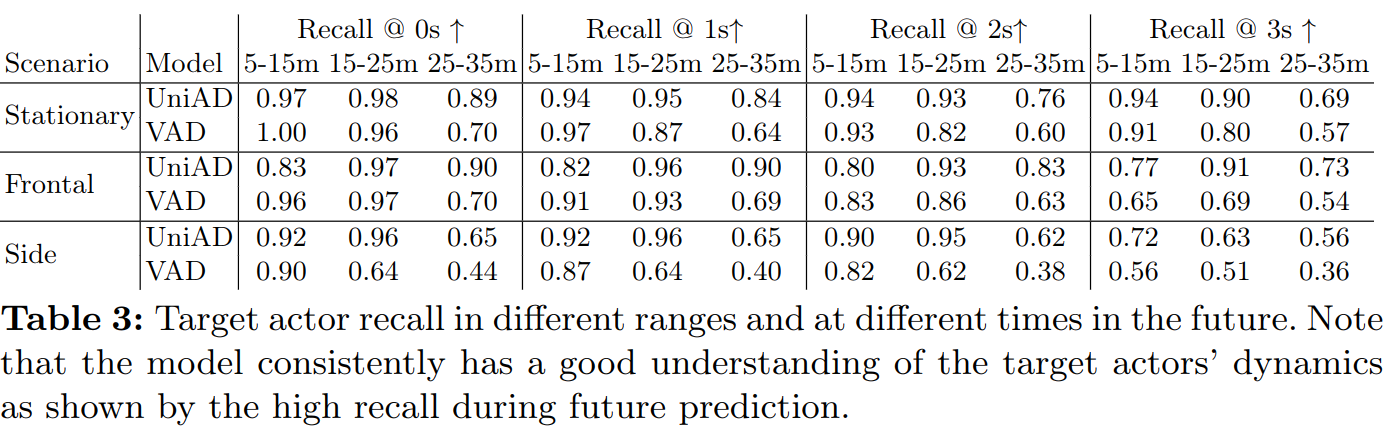

One might suspect that the models perform so poorly because of a sim-to-real gap. To investigate this, we performed a sim-to-real study in which we used the models' auxiliary outputs (detected and forecasted objects) to compute how well they perceive the safety-critical actor. As shown in the table below, the models accurately detect and forecast the safety-critical actor, but still fail to avoid the collision.

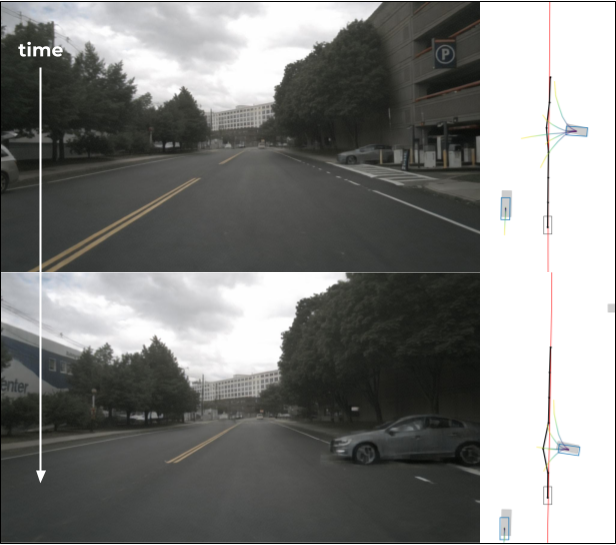

In the figure below we show a qualitative example of this. In this side scenario, UniAD correctly detects and forecasts the actor. As seen, it predicts many possible trajectories for the actor, several of which drive straight into our trajectory. Despite this, it fails to perform a safe maneuver, such as braking or steering with a sufficient safety margin, to avoid the collision.

BibTeX

@inproceedings{ljungbergh2024neuroncap,

author="Ljungbergh, William and Tonderski, Adam and Johnander, Joakim and Caesar, Holger and {\AA}str{\"o}m, Kalle and Felsberg, Michael and Petersson, Christoffer",

title="NeuroNCAP: Photorealistic Closed-Loop Safety Testing for Autonomous Driving",

booktitle={European Conference on Computer Vision},

year="2025",

pages="161--177",

isbn="978-3-031-73404-5",

organization = {Springer}

}