IDSplat: Instance-Decomposed 3D Gaussian Splatting for Driving Scenes

Abstract

Reconstructing dynamic driving scenes is essential for developing autonomous systems through sensor-realistic simulation. Although recent methods achieve high-fidelity reconstructions, they either rely on costly human annotations for object trajectories or use time-varying representations without explicit object-level decomposition, leading to intertwined static and dynamic elements that hinder scene separation. We present IDSplat, a self-supervised 3D Gaussian Splatting framework that reconstructs dynamic scenes with explicit instance decomposition and learnable motion trajectories, without requiring human annotations. Our key insight is to model dynamic objects as coherent instances undergoing rigid transformations, rather than unstructured time-varying primitives. For instance decomposition, we employ zero-shot, language-grounded video tracking anchored to 3D using lidar, and estimate consistent poses via feature correspondences. We introduce a coordinated-turn smoothing scheme to obtain temporally and physically consistent motion trajectories, mitigating pose misalignments and tracking failures, followed by joint optimization of object poses and Gaussian parameters. Experiments on the Waymo Open Dataset demonstrate that our method achieves competitive reconstruction quality while maintaining instance-level decomposition and generalizes across diverse sequences and view densities without retraining, making it practical for large-scale autonomous driving applications. Code released at https://github.com/zenseact/idsplat.

Method

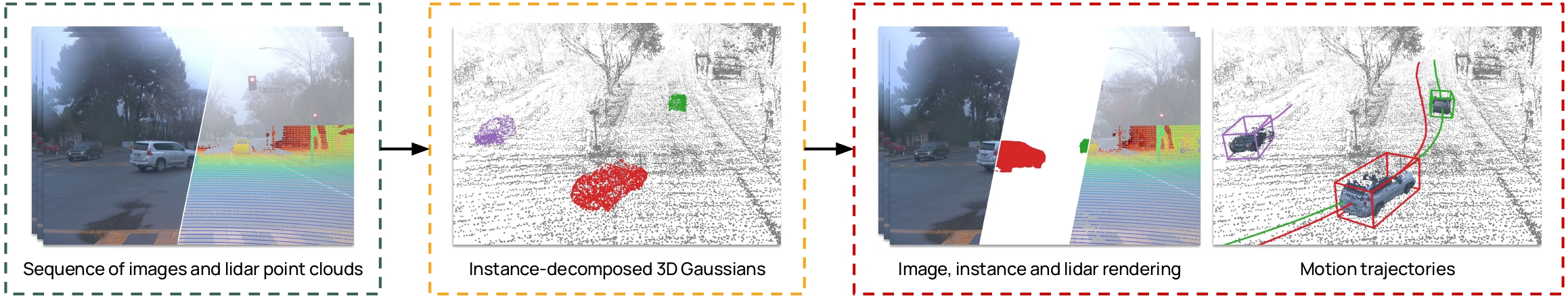

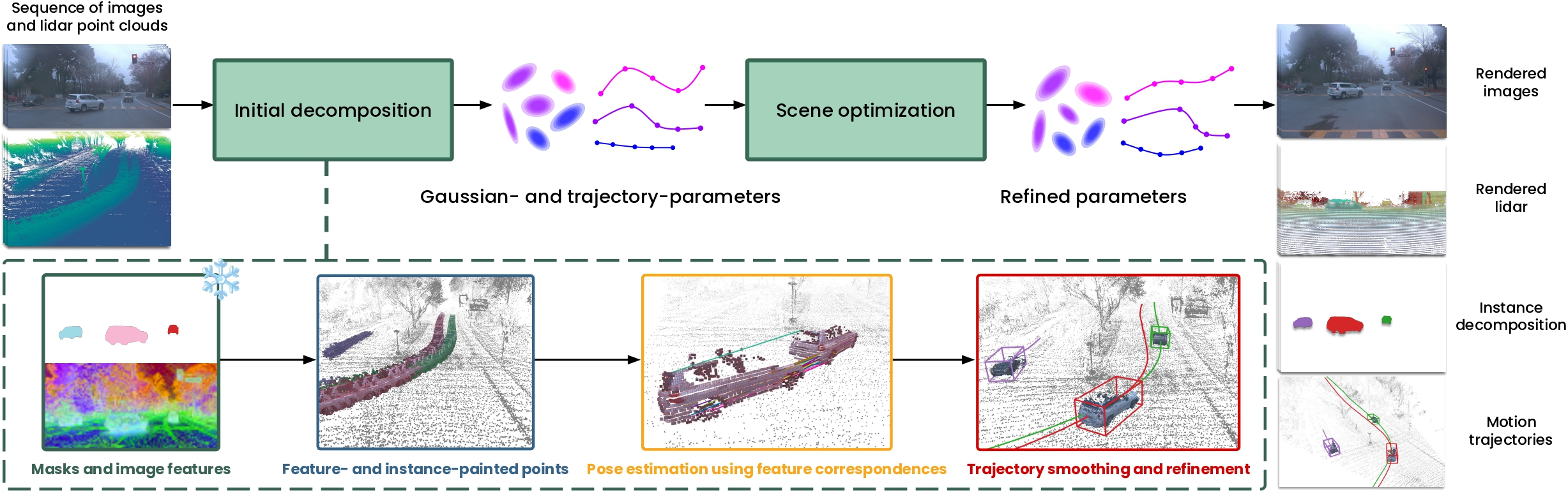

IDSplat takes a sequence of images and lidar point clouds as input and produces a fully instance-decomposed 3D reconstruction of the scene that provides images, lidar, instance masks, and object trajectories. The method proceeds in two stages. First, an initial scene decomposition is obtained by lifting zero-shot 2D instance masks into 3D using lidar, estimating object trajectories via registration with DINOv3 features, and refining those trajectories through iterative coordinated-turn smoothing. Second, all Gaussian and trajectory parameters are optimized jointly.

Instance decomposition

To decompose the scene into object instances without any human annotations, IDSplat uses Grounded-SAM-2 to generate instance masks in a zero-shot manner with language prompts. These 2D masks are lifted into 3D by projecting lidar points onto the image plane and assigning them the corresponding instance IDs. Initial object poses are estimated by registering each instance’s lidar points across frames using RANSAC.
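The lifting step can be illustrated with a minimal sketch. It assumes a pinhole camera and a known lidar-to-camera transform; the function name `lift_instance_ids`, the argument layout, and the convention that `0` means background are illustrative assumptions, not taken from the IDSplat code. Occlusion handling is omitted.

```python
import numpy as np


def lift_instance_ids(points_lidar, T_cam_from_lidar, K, instance_mask):
    """Give each lidar point the instance ID of the pixel it projects to.

    points_lidar: (N, 3) points in the lidar frame.
    T_cam_from_lidar: (4, 4) rigid transform from lidar to camera frame.
    K: (3, 3) pinhole intrinsics (assumed camera model).
    instance_mask: (H, W) integer array, 0 = background, k > 0 = instance k.
    Returns an (N,) integer array; -1 marks points that fall outside the image
    or lie behind the camera.
    """
    n = points_lidar.shape[0]
    homog = np.hstack([points_lidar, np.ones((n, 1))])
    pts_cam = (T_cam_from_lidar @ homog.T).T[:, :3]

    ids = np.full(n, -1, dtype=np.int64)
    in_front = pts_cam[:, 2] > 1e-6  # keep points with positive depth

    # Perspective projection to pixel coordinates.
    uvw = (K @ pts_cam[in_front].T).T
    u = np.floor(uvw[:, 0] / uvw[:, 2]).astype(np.int64)
    v = np.floor(uvw[:, 1] / uvw[:, 2]).astype(np.int64)

    h, w = instance_mask.shape
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)

    # Map back to indices in the original point array and read the mask.
    idx = np.flatnonzero(in_front)[inside]
    ids[idx] = instance_mask[v[inside], u[inside]]
    return ids
```

Points sharing an ID across frames would then form the per-instance point sets that are registered to estimate initial poses.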

Trajectory smoothing

Pose estimates from RANSAC are inevitably noisy, because instance masks may be missing in some frames and lidar observations can be sparse or incomplete. To obtain temporally and physically consistent trajectories, IDSplat refines the initial poses through an iterative coordinated-turn (CT) smoothing process. The final joint optimization then refines the trajectories further using the rendering losses.
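For intuition, the sketch below shows the prediction step of a standard planar coordinated-turn motion model, in which an object moves with constant speed and constant turn rate between time steps. The state layout `[x, y, v, heading, turn_rate]` and the function names are assumptions for illustration; the page does not specify the state parameterization or the smoother used in IDSplat.

```python
import numpy as np


def ct_predict(state, dt, eps=1e-8):
    """Propagate a coordinated-turn state by dt seconds.

    state: array [x, y, v, heading, turn_rate] (assumed layout).
    Uses the closed-form solution for constant speed and turn rate,
    falling back to straight-line motion when the turn rate is ~0.
    """
    x, y, v, psi, omega = state
    if abs(omega) > eps:
        r = v / omega  # signed turning radius
        x_new = x + r * (np.sin(psi + omega * dt) - np.sin(psi))
        y_new = y + r * (np.cos(psi) - np.cos(psi + omega * dt))
    else:
        x_new = x + v * dt * np.cos(psi)
        y_new = y + v * dt * np.sin(psi)
    return np.array([x_new, y_new, v, psi + omega * dt, omega])


def ct_rollout(state, dt, steps):
    """Return the (steps + 1, 5) trajectory obtained by repeated prediction."""
    traj = [np.asarray(state, dtype=np.float64)]
    for _ in range(steps):
        traj.append(ct_predict(traj[-1], dt))
    return np.stack(traj)
```

A smoother built on such a model would weigh these predictions against the noisy per-frame pose estimates, which is how frames with missing masks or sparse lidar can still receive a plausible pose.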

Results

We evaluate IDSplat in four experimental settings, each following the setup of a prior work (DeSiRe-GS, AD-GS, CoDa-4DGS, and SplatFlow). Among annotation-free methods, IDSplat achieves the highest PSNR in all four settings and the highest DPSNR wherever it is reported, and it matches or exceeds annotation-based baselines on several metrics.

| Method | Anno. free | PSNR ↑ | SSIM ↑ | LPIPS ↓ | DPSNR ↑ |
|---|---|---|---|---|---|
| DeSiRe-GS setting | | | | | |
| MARS | ✗ | 26.61 | — | — | 22.21 |
| SplatAD | ✗ | 30.80 | 0.900 | 0.160 | 28.97 |
| PVG | ✓ | 29.77 | — | — | 27.19 |
| EmerNeRF | ✓ | 25.14 | — | — | 23.49 |
| S3Gaussian | ✓ | 27.44 | — | — | 22.92 |
| DeSiRe-GS | ✓ | 28.76 | 0.873 | 0.193 | 26.26 |
| IDSplat (ours) | ✓ | 30.83 | 0.900 | 0.160 | 29.20 |
| AD-GS setting | | | | | |
| StreetGS | ✗ | 33.97 | 0.926 | 0.227 | 28.50 |
| 4DGS | ✗ | 34.64 | 0.940 | 0.244 | 29.77 |
| SplatAD | ✗ | 34.24 | 0.925 | 0.246 | 29.68 |
| SplatAD (CasTrack) | ✗ | 32.52 | 0.924 | 0.241 | 25.31 |
| PVG | ✓ | 29.54 | 0.895 | 0.266 | 21.56 |
| EmerNeRF | ✓ | 31.32 | 0.881 | 0.301 | 21.80 |
| Grid4D | ✓ | 32.19 | 0.921 | 0.253 | 22.77 |
| AD-GS | ✓ | 33.91 | 0.927 | 0.228 | 27.41 |
| IDSplat (ours) | ✓ | 34.59 | 0.929 | 0.235 | 29.63 |
| CoDa-4DGS setting | | | | | |
| CoDa-4DGS | ✓ | 28.66 | 0.900 | 0.058 | — |
| IDSplat (ours) | ✓ | 30.50 | 0.875 | 0.090 | — |
| SplatFlow setting | | | | | |
| SplatFlow | ✓ | 28.71 | 0.874 | 0.239 | — |
| IDSplat (ours) | ✓ | 29.95 | 0.879 | 0.183 | — |

NVS results across multiple evaluation settings. Rankings (1st, 2nd, 3rd) are among annotation-free methods.

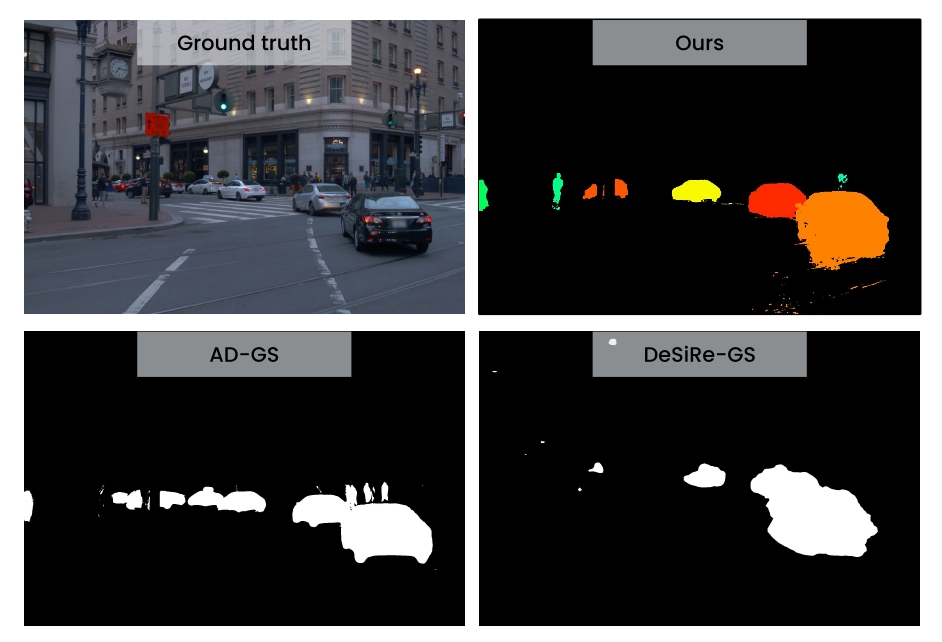

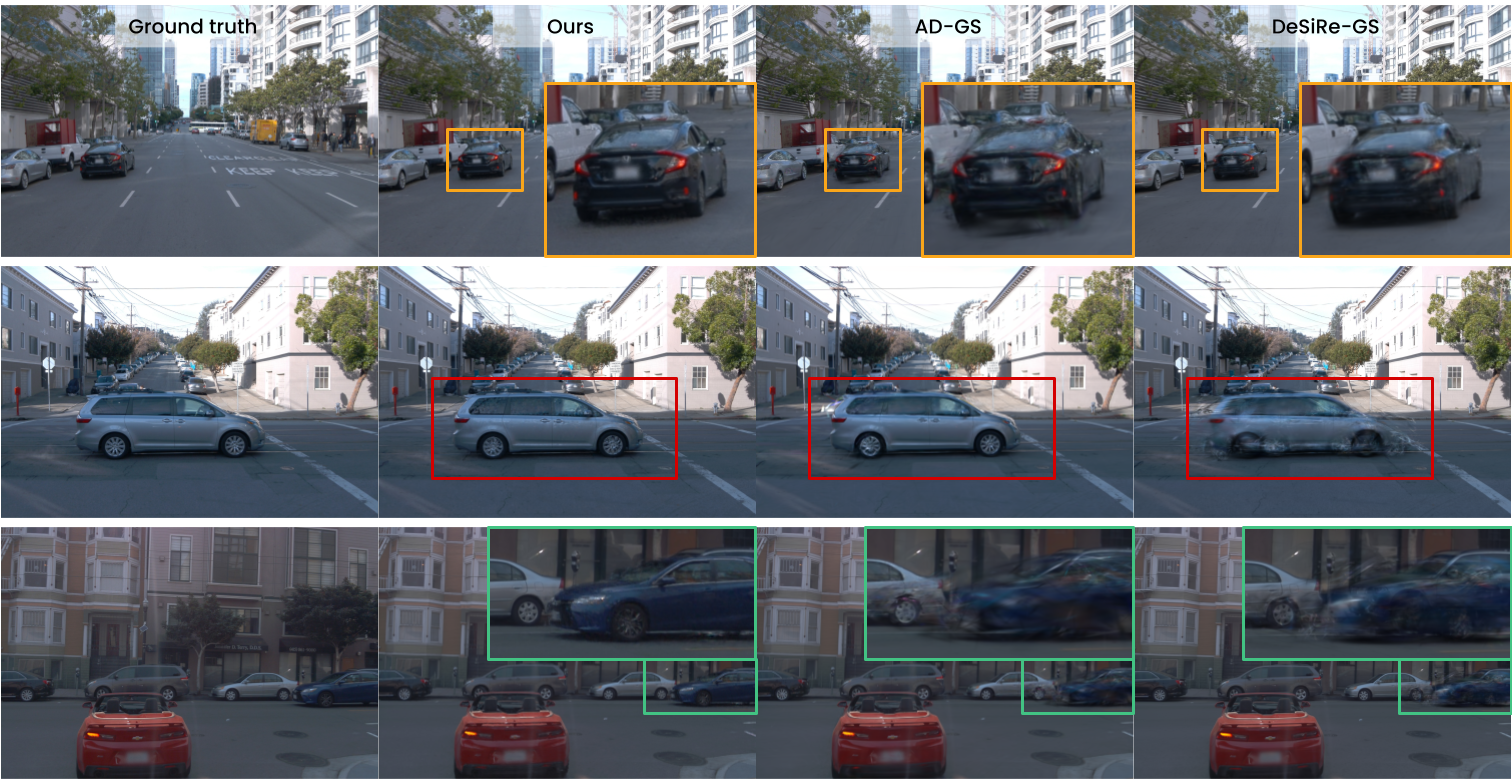

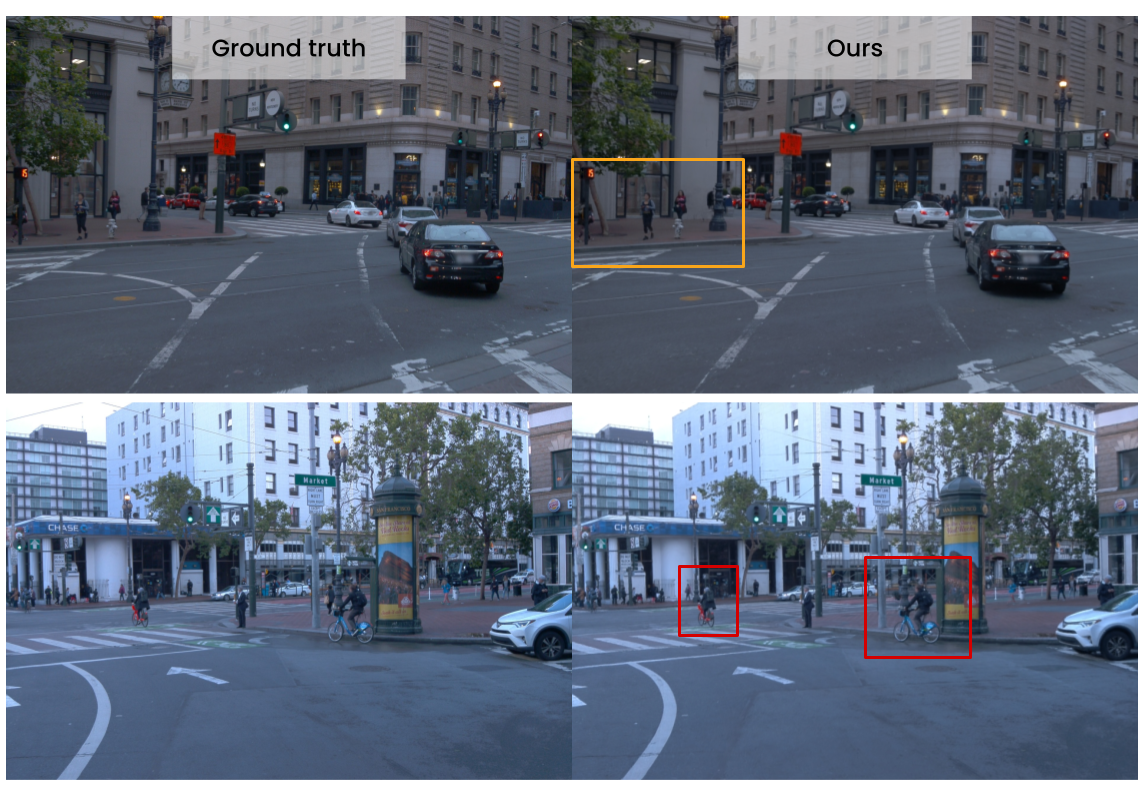

The figure below shows a qualitative comparison of our method with DeSiRe-GS and AD-GS on the dynamic NOTR subset of the Waymo Open Dataset, where all methods were trained with 75% of frames (3 out of 4 views).

Pedestrians and cyclists are also rendered with high fidelity despite being modelled as rigid.

To assess robustness to training view density, we report DPSNR (PSNR in dynamic regions) on dynamic NOTR at several view densities. Even with sparse training frames, IDSplat achieves high DPSNR, highlighting the effectiveness of our instance-decomposed model of dynamic objects compared to the self-supervised 3DGS state of the art.
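As a rough illustration of the metric, the sketch below computes PSNR restricted to a boolean mask of dynamic pixels. How that mask is obtained depends on the evaluation protocol of each setting and is not specified here; the function name `psnr` and its arguments are illustrative assumptions.

```python
import numpy as np


def psnr(pred, target, mask=None, max_val=1.0):
    """PSNR in dB between two images, optionally restricted to masked pixels.

    pred, target: (H, W, 3) float arrays with values in [0, max_val].
    mask: optional (H, W) boolean array; True marks dynamic-region pixels.
    Passing a mask gives a DPSNR-style score; None gives full-image PSNR.
    """
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    sq_err = (pred - target) ** 2
    if mask is not None:
        mask = np.asarray(mask, dtype=bool)
        if not mask.any():
            return float("nan")  # no dynamic pixels in this frame
        sq_err = sq_err[mask]  # selects all channels of the masked pixels
    mse = sq_err.mean()
    if mse == 0.0:
        return float("inf")
    return float(10.0 * np.log10(max_val**2 / mse))
```

Because the error is averaged over dynamic pixels only, a method that blurs or drops moving objects is penalized far more strongly than in full-image PSNR, where the static background dominates.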


This is further illustrated with qualitative examples of novel view synthesis for different viewpoint densities.

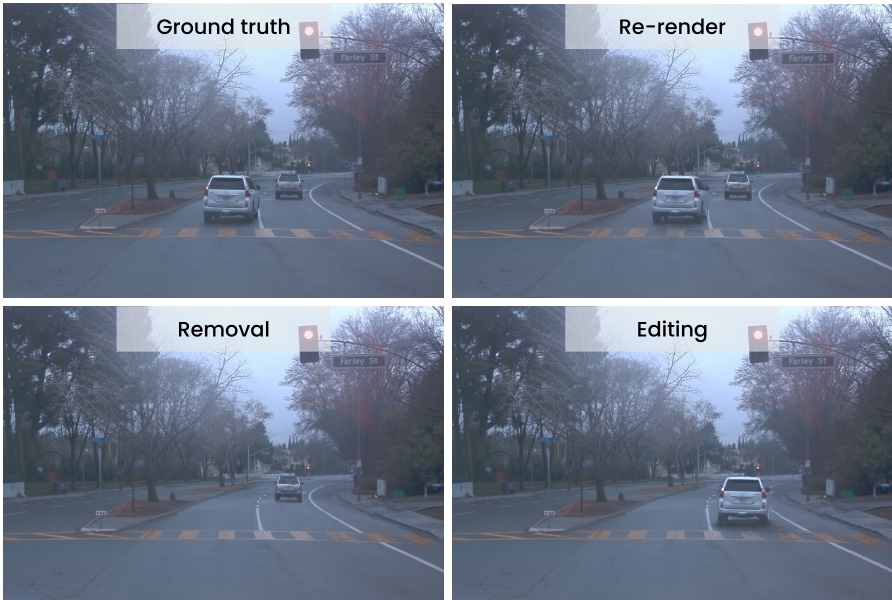

Scene manipulation

Because each dynamic object in IDSplat is represented as an independent instance, the reconstructed scene is fully decomposable and editable. Individual objects can be removed from the scene entirely, repositioned along new trajectories, or replaced, without re-optimizing the background or other instances. This makes IDSplat directly useful for closed-loop simulation. Collected data can be transformed into safety-critical scenarios simply by modifying actor trajectories. Combined with the method’s zero-shot generalization across datasets and object classes, this positions IDSplat as a significant step towards scalable autonomous driving simulation.
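The kinds of edits described above can be sketched on a toy representation in which each instance stores Gaussian centers in its own object frame together with one object-to-world pose per frame. The data layout and the helper names (`world_means`, `shift_trajectory`, `remove_instance`) are hypothetical and do not reflect the released code; a full implementation would also rotate each Gaussian's orientation along with its center.

```python
import numpy as np


def world_means(means_obj, pose_world_from_obj):
    """Map (N, 3) object-frame Gaussian centers to world coordinates."""
    R = pose_world_from_obj[:3, :3]
    t = pose_world_from_obj[:3, 3]
    return means_obj @ R.T + t


def shift_trajectory(poses, offset_world):
    """Return a copy of (T, 4, 4) poses translated by a fixed world offset."""
    edited = poses.copy()
    edited[:, :3, 3] += np.asarray(offset_world, dtype=poses.dtype)
    return edited


def remove_instance(instances, instance_id):
    """Return a new instance dictionary without the given instance."""
    return {k: v for k, v in instances.items() if k != instance_id}
```

In this layout an edit touches only one instance's pose sequence or its dictionary entry, so the background and the remaining instances stay unchanged, which matches the claim that no re-optimization is needed.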

BibTeX

@misc{lindström2026idsplatinstancedecomposed3dgaussian,
title={IDSplat: Instance-Decomposed 3D Gaussian Splatting for Driving Scenes},
author={Carl Lindström and Mahan Rafidashti and Maryam Fatemi and Lars Hammarstrand and Martin R. Oswald and Lennart Svensson},
year={2026},
eprint={2511.19235},
archivePrefix={arXiv},
primaryClass={cs.CV},
url={https://arxiv.org/abs/2511.19235},
}